Recommendation Service

Overview

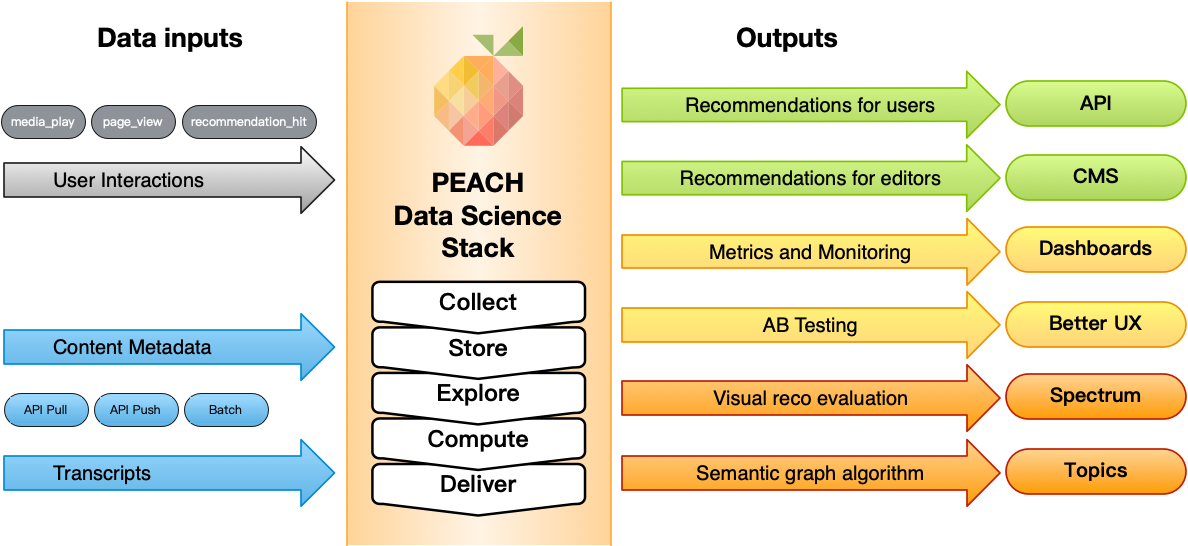

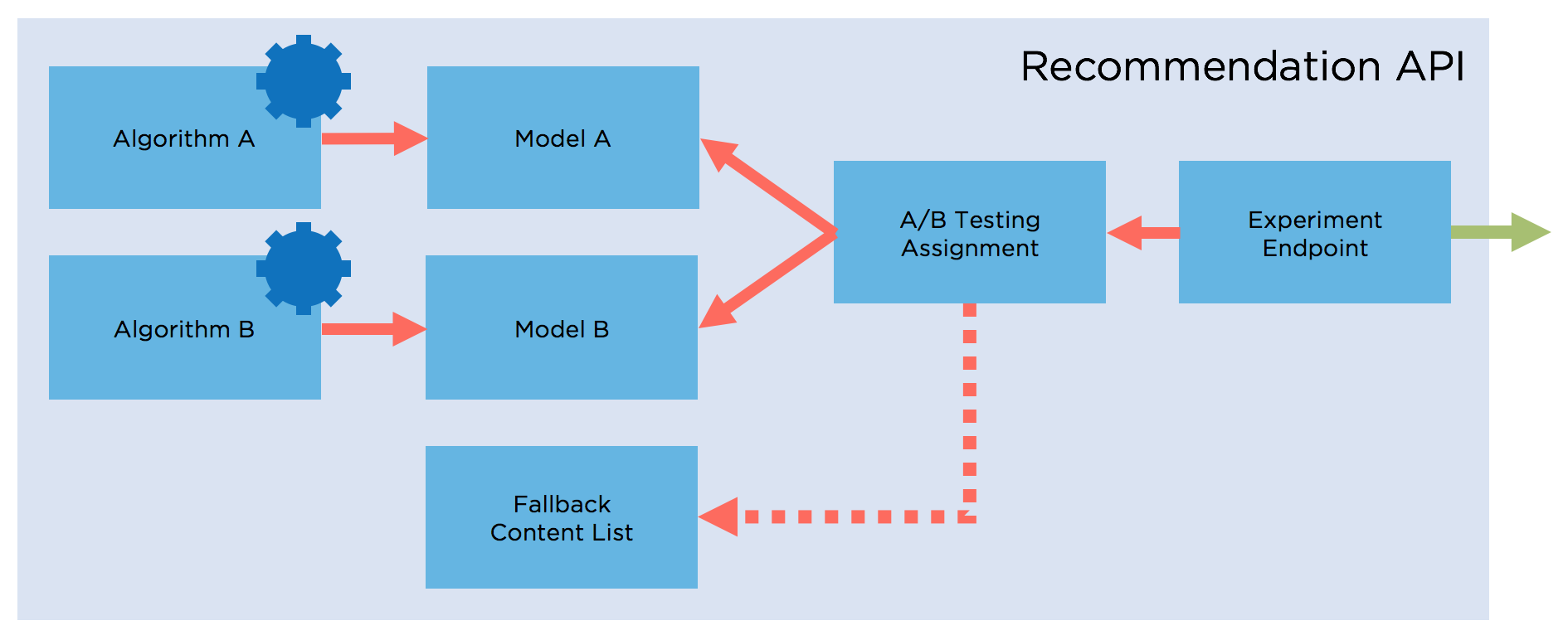

A technical overview diagram and more detailed technical explanations are available in the technical guides.

Define the goal

While this step might seem obvious, it is an essential one. You need to define clearly what you intend to provide to your users and what benefits you expect:

- Lead the user to trending content, or resurface older content?
- Favour a high number of clicks, or aim for more diversity?
- Lead the user to related content when they are already engaged with something
- Let the user discover new Programs
- Provide safe recommendations to a child audience

You can't improve what you cannot measure: when your goals are clear and you can tell when they have been reached, you are on a better path for iterative improvement.
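To make a goal measurable, it helps to attach a number to it. The sketch below is illustrative only: it assumes two possible goals from the list above (more clicks, more diversity) and expresses them as a click-through rate and a category coverage ratio. The function names and the choice of metrics are assumptions, not part of PEACH.

```python
from typing import Iterable, Sequence


def click_through_rate(impressions: int, clicks: int) -> float:
    """Share of displayed recommendations that were clicked."""
    if impressions == 0:
        return 0.0
    return clicks / impressions


def category_coverage(
    recommended_categories: Iterable[str],
    catalogue_categories: Sequence[str],
) -> float:
    """Fraction of catalogue categories that appear at least once
    among the recommended items (a simple proxy for diversity)."""
    catalogue = set(catalogue_categories)
    if not catalogue:
        return 0.0
    seen = set(recommended_categories) & catalogue
    return len(seen) / len(catalogue)


if __name__ == "__main__":
    # Hypothetical numbers, for illustration only.
    print(click_through_rate(impressions=200, clicks=30))
    print(category_coverage(["news", "sport", "news"],
                            ["news", "sport", "culture", "kids"]))
```

A goal such as "favour diversity" can then be phrased as a target on the coverage value, which gives each iteration something concrete to compare against.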

Data collection and exploration

Providing recommendations to clients follows the typical data science workflow. The algorithm is chosen according to the use case, and the appropriate data needs to be made available. This can be user-generated events (playing content, visiting a page, reading an article, ...) as well as metadata describing the content (title, description, categories, transcripts, ...).

The Data Scientists can then explore the data using the PEACH Lab to assess its quality and to understand how to design the algorithm to address the business case.
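As a rough illustration of the two kinds of data described above, the sketch below models user events and content metadata as simple records and runs one basic quality check: finding content that appears in events but has no metadata. The class names, field names and the check itself are hypothetical and do not reflect the actual PEACH schema.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Iterable, List, Tuple


@dataclass(frozen=True)
class UserEvent:
    user_id: str
    content_id: str
    event_type: str  # e.g. "play", "page_view", "article_read" (assumed values)
    timestamp: datetime


@dataclass(frozen=True)
class ContentMetadata:
    content_id: str
    title: str
    description: str = ""
    categories: Tuple[str, ...] = ()


def events_without_metadata(
    events: Iterable[UserEvent],
    catalogue: Iterable[ContentMetadata],
) -> List[str]:
    """Return the sorted ids of content seen in events but absent from the catalogue."""
    known = {item.content_id for item in catalogue}
    return sorted({event.content_id for event in events} - known)


if __name__ == "__main__":
    now = datetime(2024, 1, 1, tzinfo=timezone.utc)
    events = [
        UserEvent("u1", "c1", "play", now),
        UserEvent("u2", "c9", "page_view", now),
    ]
    catalogue = [ContentMetadata("c1", "Evening news", categories=("news",))]
    print(events_without_metadata(events, catalogue))
```

Checks of this kind are typically run during exploration, before any algorithm is designed on top of the data.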

Recommendation algorithms

To provide recommendations to members already participating in PEACH, the team has devised a few algorithms that members already use. A non-exhaustive list of the algorithms available in the Data Scientists Platform can be found on the Data Scientist's Algorithms page.

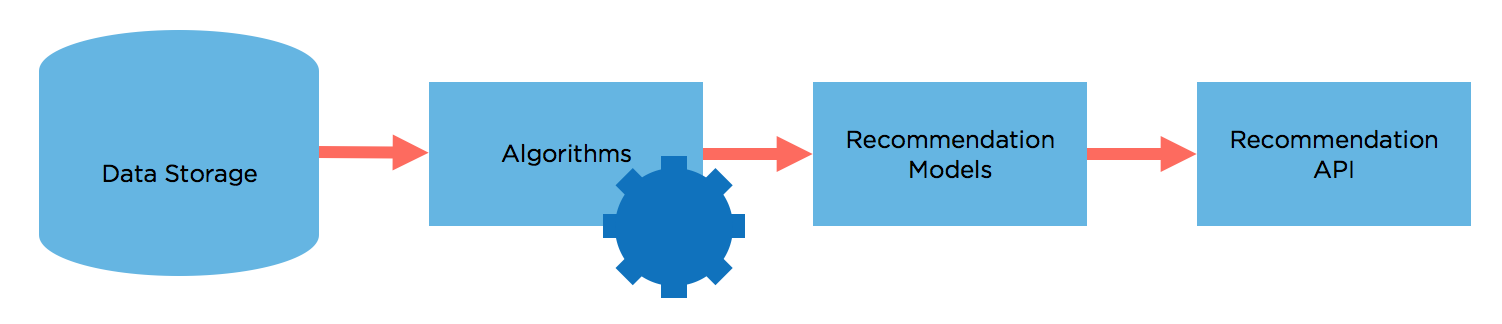

Processing & Distribution

Once an algorithm is devised, its recommendation model needs to be recomputed periodically using Tasks so that it includes the latest views and available content.

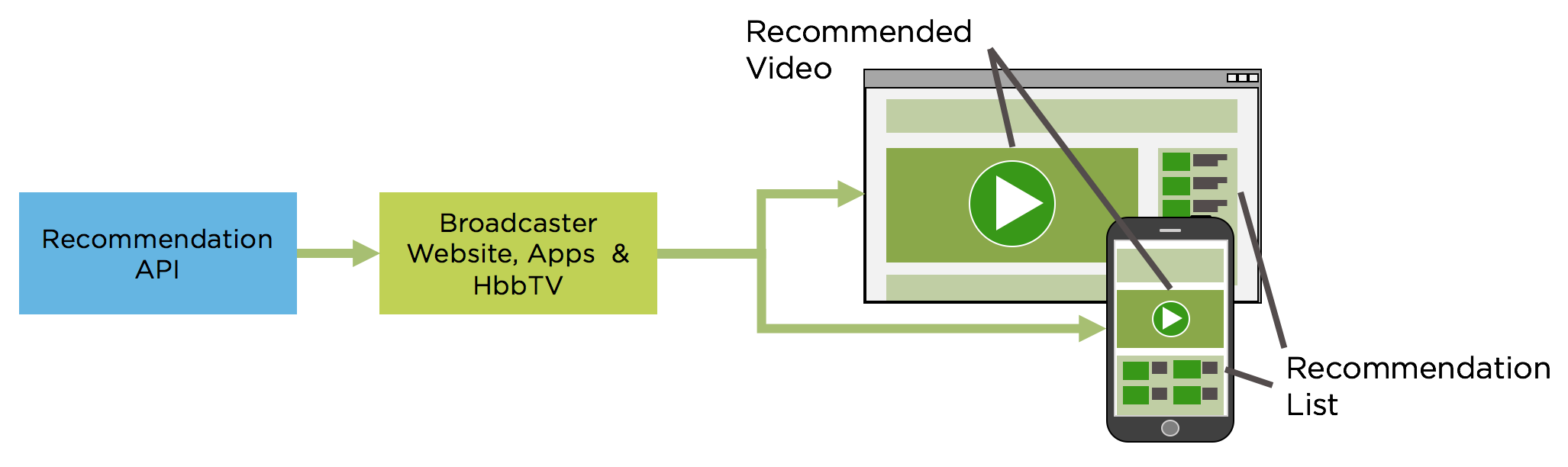
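To give an idea of what a periodic model computation might do, here is a minimal sketch that rebuilds a simple item co-occurrence model ("viewed together by the same user") from the latest views. Co-occurrence counting is used here only as an example technique; it is not necessarily one of the PEACH algorithms, and the function name and input format are assumptions. Scheduling through Tasks is not shown.

```python
from collections import defaultdict
from itertools import combinations
from typing import Dict, Iterable, List, Tuple


def build_cooccurrence_model(
    views: Iterable[Tuple[str, str]],
    top_n: int = 3,
) -> Dict[str, List[str]]:
    """Build {content_id: related content ids} from (user_id, content_id) pairs.

    Related items are ordered by how many users viewed both items,
    with ties broken alphabetically so the output is deterministic.
    """
    # Group the distinct items viewed by each user.
    items_by_user = defaultdict(set)
    for user_id, content_id in views:
        items_by_user[user_id].add(content_id)

    # Count, for every pair of items, the users who viewed both.
    counts = defaultdict(lambda: defaultdict(int))
    for items in items_by_user.values():
        for a, b in combinations(sorted(items), 2):
            counts[a][b] += 1
            counts[b][a] += 1

    # Keep only the top_n most co-viewed items per item.
    model = {}
    for item, related in counts.items():
        ranked = sorted(related.items(), key=lambda kv: (-kv[1], kv[0]))
        model[item] = [other for other, _ in ranked[:top_n]]
    return model


if __name__ == "__main__":
    views = [("u1", "a"), ("u1", "b"), ("u2", "a"),
             ("u2", "b"), ("u2", "c"), ("u3", "a"), ("u3", "c")]
    print(build_cooccurrence_model(views))
```

Running such a function on a schedule, with a fresh extract of views each time, is one way to keep the model aligned with recent behaviour and newly available content.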

Recommendations are provided to clients by using the models to compute them, and are distributed through REST Recommendation APIs.

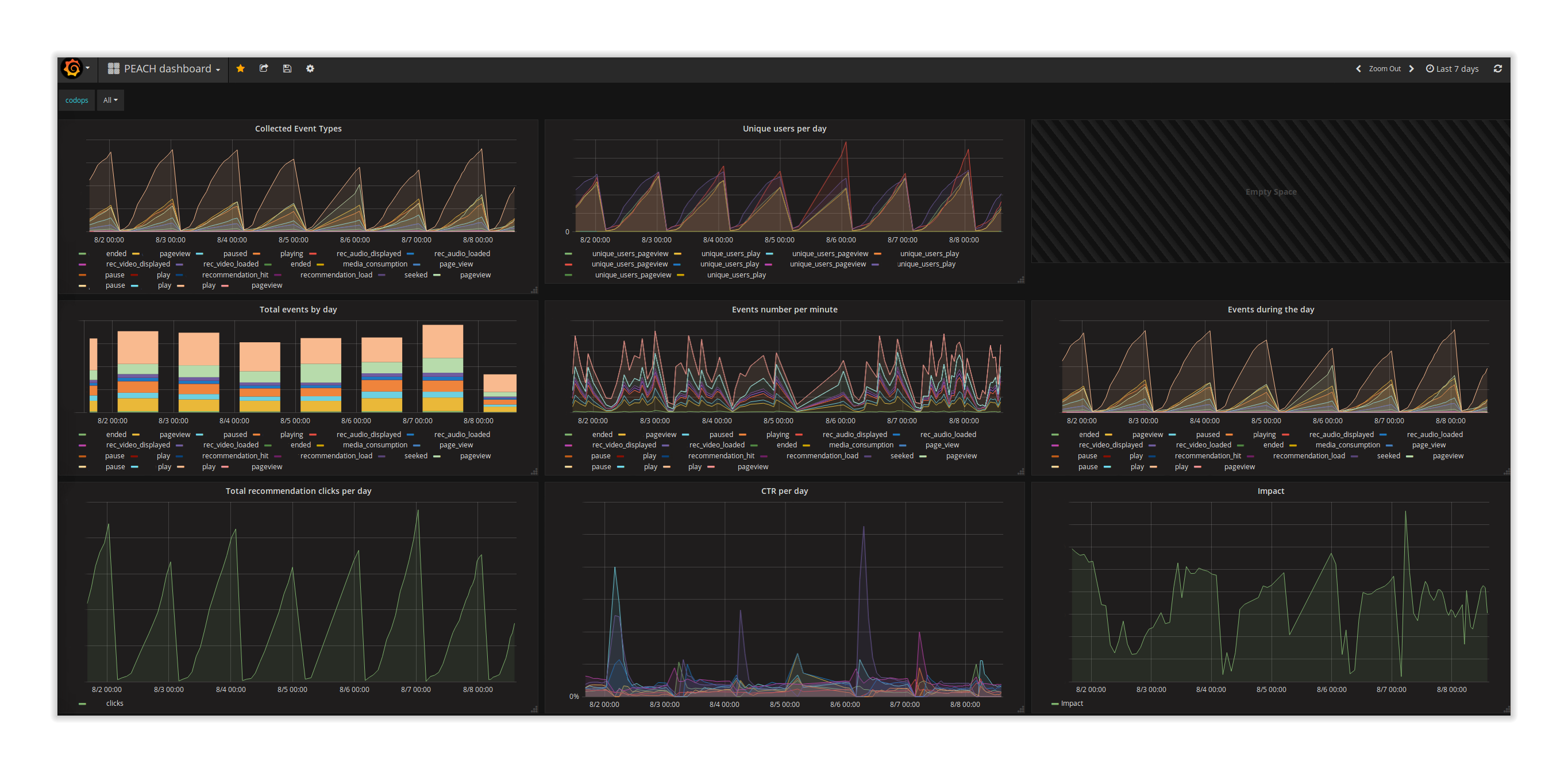
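The serving step can be pictured as a lookup in a precomputed model followed by serialisation for a REST response. The sketch below assumes a model shaped as a dictionary from a content id to a ranked list of related ids; the function names, the filtering of already seen items and the JSON field names are hypothetical and are not the actual PEACH API.

```python
import json
from typing import Dict, Iterable, List


def recommend(
    model: Dict[str, List[str]],
    current_item: str,
    already_seen: Iterable[str] = (),
    limit: int = 5,
) -> List[str]:
    """Return up to `limit` related items, skipping the current and already seen ones."""
    seen = set(already_seen) | {current_item}
    candidates = model.get(current_item, [])
    return [item for item in candidates if item not in seen][:limit]


def to_response_body(current_item: str, items: List[str]) -> str:
    """Serialise a recommendation as a JSON string (field names are assumptions)."""
    return json.dumps({"item": current_item, "recommendations": items})


if __name__ == "__main__":
    model = {"a": ["b", "c", "d"]}
    items = recommend(model, "a", already_seen=["c"], limit=2)
    print(to_response_body("a", items))
```

Keeping the model lookup separate from the response formatting means the same model can back several endpoints or layouts.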

Evaluation and Monitoring

To improve recommendations and meet broadcasters' goals in terms of recommendation impact, the platform provides an algorithm evaluation system, which enables a broadcaster to run A/B testing experiments and see the results on a dashboard.

Understanding how people react to recommended content and which content is consumed helps validate the choice of recommendation algorithms and parameters, as well as the user experience they are embedded in. The location, layout and format of the recommended content are key parameters, which will require different types of algorithms.

A/B testing enables broadcasters to experiment with multiple options in parallel and make data-driven decisions. Any change in the algorithm or the user interaction can be measured. That information is aggregated into a configurable dashboard made available to developers, data scientists and editorial teams so that they can adapt the user experience, the algorithms and possibly the format of the content.
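As an illustration of how an A/B experiment can be run and read, the sketch below shows two common building blocks: a deterministic, hash-based assignment of users to variants, and a two-proportion z statistic comparing the click-through rates of two variants. Both are generic techniques chosen for this example; how the PEACH evaluation system assigns users and computes results is not described here, and all names are assumptions.

```python
import hashlib
import math
from typing import Sequence


def assign_variant(
    user_id: str,
    experiment: str,
    variants: Sequence[str] = ("A", "B"),
) -> str:
    """Assign a user to a variant; the same user and experiment always give the same result."""
    digest = hashlib.sha256(f"{experiment}:{user_id}".encode("utf-8")).hexdigest()
    return variants[int(digest, 16) % len(variants)]


def two_proportion_z(clicks_a: int, shown_a: int, clicks_b: int, shown_b: int) -> float:
    """z statistic for the click-through rate difference (B minus A).

    Uses the pooled standard error; both `shown_*` counts must be positive.
    """
    if shown_a <= 0 or shown_b <= 0:
        raise ValueError("impression counts must be positive")
    p_a = clicks_a / shown_a
    p_b = clicks_b / shown_b
    pooled = (clicks_a + clicks_b) / (shown_a + shown_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / shown_a + 1 / shown_b))
    if std_err == 0:
        return 0.0
    return (p_b - p_a) / std_err


if __name__ == "__main__":
    print(assign_variant("user-1", "homepage-carousel"))
    # Hypothetical counts, for illustration only.
    print(two_proportion_z(clicks_a=100, shown_a=1000, clicks_b=130, shown_b=1000))
```

An absolute z value above roughly 1.96 corresponds to a difference that is significant at the 5% level in a two-sided test, which is one possible rule for deciding between variants.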